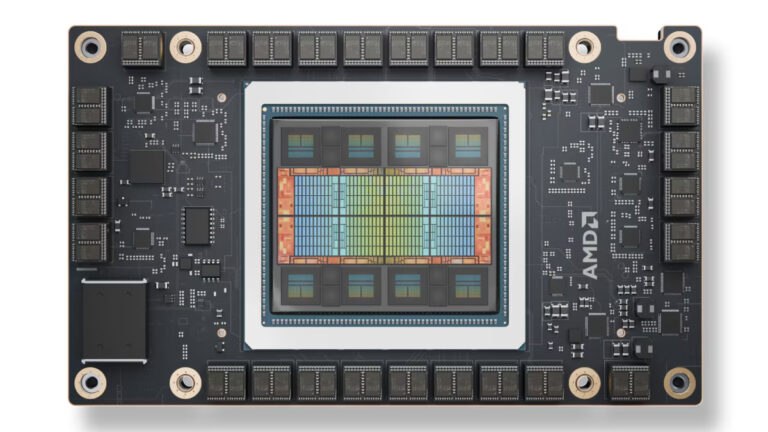

On Thursday, AMD announced its new MI325X AI accelerator chip, which is set to roll out to data center customers in the fourth quarter of this year. At an event in San Francisco, the company claimed the new chip offers “industry-leading” performance compared to Nvidia’s current H200 GPUs, which are widely used in data centers to power AI applications such as ChatGPT.

With its new chip, AMD hopes to narrow the performance gap with Nvidia in the AI processor market. The Santa Clara-based company also revealed plans for its next-generation MI350 chip, which is positioned as a head-to-head competitor to Nvidia’s new Blackwell system and is expected to ship in the second half of 2025.

In an interview with the Financial Times, AMD CEO Lisa Su expressed her ambition for AMD to become the “end-to-end” AI leader over the next decade. “This is the beginning, not the end of the AI race,” she told the publication.