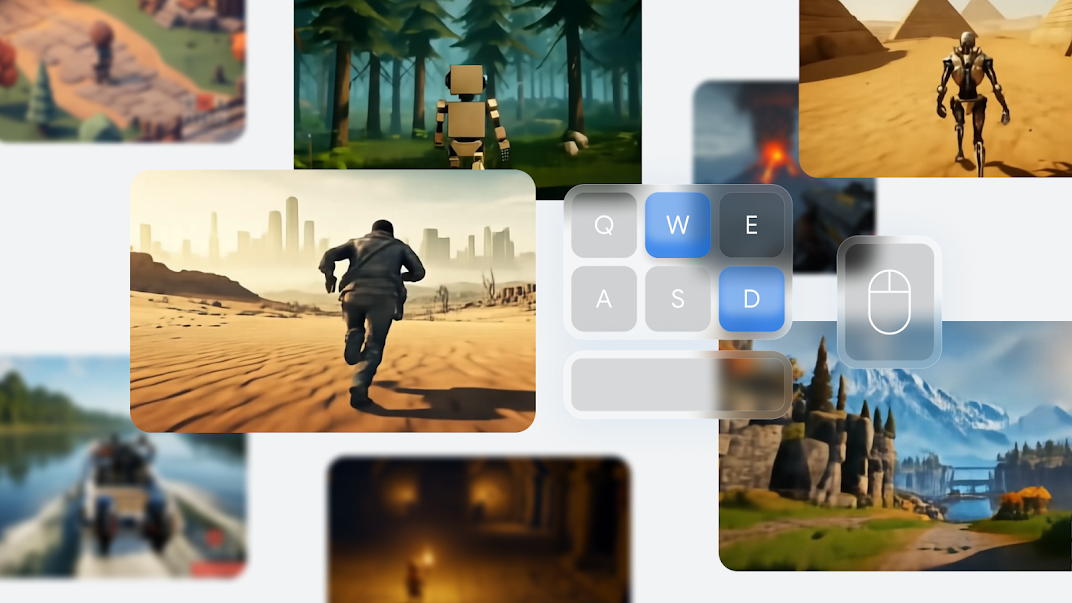

In March, Google showed off its first Genie AI model. After training on thousands of hours of 2D run-and-jump video games, the model could generate halfway-passable, interactive impressions of those games based on generic images or text descriptions.

Nine months later, this week’s reveal of the Genie 2 model expands that idea into the realm of fully 3D worlds, complete with controllable third- or first-person avatars. Google’s announcement talks up Genie 2’s role as a “foundational world model” that can create a fully interactive internal representation of a virtual environment. That could allow AI agents to train themselves in synthetic but realistic environments, Google says, forming an important stepping stone on the way to artificial general intelligence.

But while Genie 2 shows just how much progress Google’s DeepMind team has achieved in the last nine months, the limited public information about the model thus far leaves a lot of questions about how close we are to these foundational world models being useful for anything but some short but sweet demos.