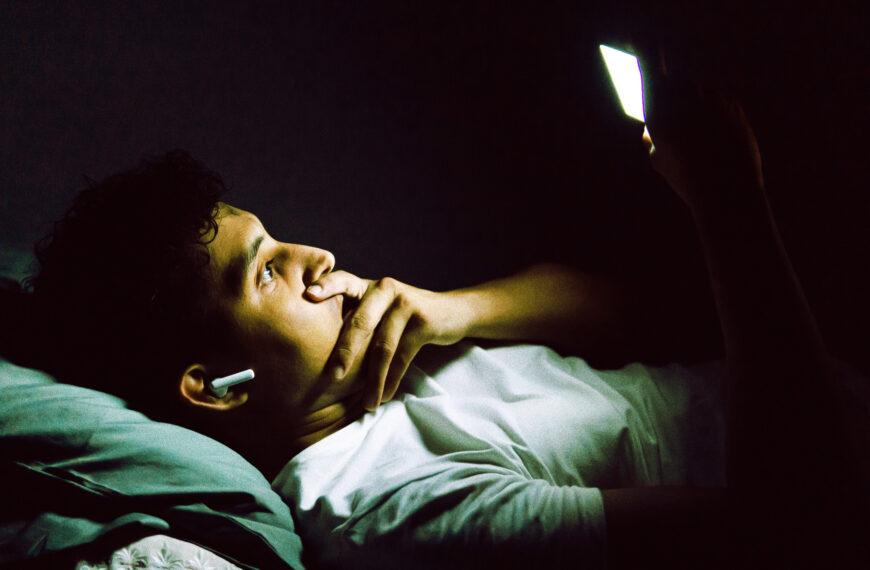

Bad things happen when an AI chatbot latches onto one of your neuroses. The infamously sycophantic machines are driving many people into hypochondriac-like spirals, causing them to obsess over their health and convince themselves that they may suffer from deadly afflictions.

George Mallon, 46, of Liverpool, England, told The Atlantic how he spent hours every day talking to ChatGPT after the preliminary results of a blood test suggested he might have blood cancer. Rather than soothing his anxieties, it supercharged them.

“It just sent me around on this crazy Ferris wheel of emotion and fear,” Mallon told the magazine, in a provocative feature about the phenomenon.

Follow-up tests confirmed Mallon didn’t have cancer, but he couldn’t stop talking to his newfound confidante. It was that addictive.

“I couldn’t put it down,” Mallon said. He lamented that the chatbot didn’t include measures to cut off his clearly unhealthy usage.

“I must have clocked over 100 hours minimum on ChatGPT, because I thought I was on the way out,” he told the magazine. “There should have been something in there that stopped me.”

The reporting describes how online communities dedicated to health anxiety are now dominated by people’s conversations with AI chatbots. Some say the AI helps, but many say it only causes them to spiral further.

Neither outcome is ideal. Four therapists who spoke to The Atlantic say that more of their clients are using AI chatbots to try to manage their health anxiety, and that they fear this is encouraging constant reassurance-seeking. That runs counter to how therapists try to combat obsessive-compulsive disorder (OCD) and other compulsive behavior, an approach predicated on fostering self-trust and accepting uncertainty, the reporting notes. Having an AI constantly in your ear to hear out these health anxieties, even if it feels soothing in the moment, doesn’t address the underlying cause and in fact makes it worse.

“Because the answers are so immediate and so personalized, it’s even more reinforcing than Googling. This kind of takes it to the next level,” Lisa Levine, a psychologist specializing in anxiety and OCD, told The Atlantic.

AI driving health anxieties is just one facet of the mental health dangers posed by obsequious chatbots. In the past year, there’s been increased attention on the phenomenon of so-called AI psychosis, the term that some experts are using to describe delusional spirals and sometimes full-blown breaks with reality caused by extensive interactions with an AI chatbot or companion. Some users, many of them teenagers and young adults, have taken their own lives after befriending an AI to which they confided suicidal thoughts. Over half a dozen wrongful death lawsuits have been filed against OpenAI, many centering on its GPT-4o model for ChatGPT, which was particularly sycophantic. Despite the increased attention on its tech’s safety, OpenAI released a medically focused model, ChatGPT Health, in January, which asks users to upload their medical documents and other private health information.

When Atlantic reporter Sage Lazzaro tried discussing her health with ChatGPT, it immediately earned “its reputation for sycophancy.” The bot continually flattered her and prompted her to ask follow-up questions to keep the conversation going. “In one of the exchanges where I continuously prompted ChatGPT with worried questions, only minutes passed between its first response suggesting that I get checked out by a doctor to its detailing for me which organs fail when an infection leads to septic shock,” she wrote.

Lazzaro vowed to “never again” use the AI, but not all of us have such conviction. When Mallon first spoke to the reporter, he said he was “seven months sober” from talking to ChatGPT about his health. But when they spoke again months later, he admitted he’d briefly relapsed.

Recalling the height of his obsession, Mallon said he “talked to it like it was a friend.”

“I was saying stupid things like, ‘How are you today?’” he added. “And at night, I’d log off and go, ‘Thanks for today. You’ve really helped me.’”

The post ChatGPT Is Sending People Into Obsessive Spirals of Hypochondria appeared first on Futurism.